Alibaba is placing a big bet on what comes next in artificial intelligence. The Chinese tech giant has led a $290 million investment into startup ShengShu, aiming to push beyond the limits of today’s chatbot-driven systems.

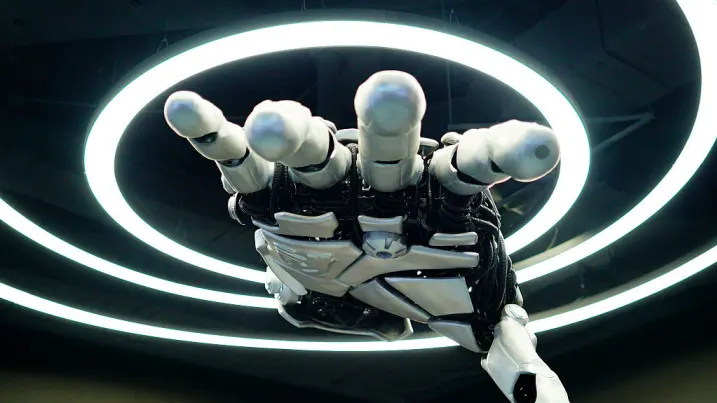

At the center of this move is a new concept known as “world models.” Unlike traditional AI systems such as ChatGPT, which are trained mostly on text, world models rely on richer, real-world data—video, sound, and even physical interactions. The goal is simple but ambitious: build AI that understands how the world actually works, not just how language is structured.

The Alibaba-led round, totaling 2 billion yuan, comes just weeks after ShengShu secured an earlier round of 600 million yuan from backers including Qiming Venture Partners. The latest funding also drew participation from TAL Education and Baidu Ventures.

ShengShu says it plans to use the funds to develop a “general world model.” This system is designed to bridge two spaces that have long remained separate: digital environments like video generation and gaming, and physical applications such as robotics and autonomous driving.

“ShengShu believes that a general world model, built on multimodal data such as vision, audio, and touch, more naturally captures how the physical world works than large language models,” the company said.

Its founder, Zhu Jun, added, “We aim to connect perception and action,” pointing to a future where AI can better predict and respond to real-world situations.

The company has already made progress. Its latest system, Vidu Q3 Pro, launched in January, ranks among the top AI tools for turning text and images into video, according to industry trackers. ShengShu even rolled out its Vidu platform globally ahead of OpenAI’s now-discontinued Sora video tool.

Competition in this space is heating up fast. Chinese tech players like Kuaishou and ByteDance have also released AI tools capable of generating video content.

Alibaba is not stopping at ShengShu. The company has been steadily building a portfolio around world model technologies. Recently, it teamed up again with Baidu Ventures to invest $50 million in Tripo AI, a startup that uses AI grounded in physical space to convert photos into digital 3D models.

Earlier, Alibaba also led a $60 million round for PixVerse, which introduced tools allowing users to guide how AI-generated videos unfold in real time.

These investments reflect a broader belief: the next leap in AI will depend on machines that can interact with the physical world. Alibaba has already released open-source video models and, earlier this year, introduced AI systems designed to power robots.

ShengShu is aligning with that direction. The company says it has formed partnerships with firms developing embodied AI—technologies like humanoid robots that can operate in homes, factories, and businesses.

Industry voices are also pointing to this shift. Kevin Kelly recently argued that world models are essential for robotics because language-based systems alone cannot handle real-world complexity.

According to Kelly, achieving human-like intelligence in AI will require three core elements: reasoning, understanding of the physical world, and continuous learning. While chatbots have advanced the “knowledge” aspect, the physical understanding piece remains underdeveloped—making world models a critical frontier.